AI Sweden Preps Region’s First Language Model


If the King of Sweden wants help drafting his annual Christmas speech this year, he could ask the same AI model that’s available to his 10 million subjects.

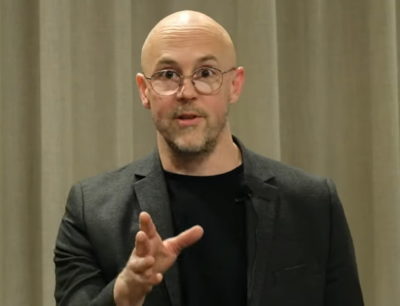

As a test, researchers prompted the model, called GPT-SW3, to draft one of the royal messages, and it did a pretty good job, according to Magnus Sahlgren, who heads research in natural language understanding at AI Sweden, a consortium kickstarting the country’s journey into the machine learning era.

“Later, our minister of digitalization visited us and asked the model to generate arguments for political positions and it came up with some really clever ones, and he intuitively understood how to prompt the model to generate good text,” Sahlgren said.

Early successes inspired work on an even larger and more powerful version of the language model they hope will serve any citizen, company or government agency in Scandinavia.

A Multilingual Model

The current version packs 3.6 billion parameters and is smart enough to do a few cool things in Swedish. Sahlgren’s team aims to train a state-of-the-art model with a whopping 175 billion parameters that can handle all sorts of language tasks in the Nordic languages of Swedish, Danish, Norwegian and, it hopes, Icelandic, too.

For example, a startup can use it to automatically generate product descriptions for an e-commerce website given only the products’ names. Government agencies can use it to quickly classify and route questions from citizens.

Companies can ask it to rapidly summarize reports so they can react fast. Hospitals can run distilled versions of the model privately on their own systems to improve patient care.
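
To put the two model sizes mentioned above in perspective, here is a rough back-of-the-envelope sketch. The parameter counts come from the article; the 2 bytes per parameter figure is an assumption (16-bit weights), and the result covers only the weights themselves, not the memory needed to train or serve a model.

```python
# Parameter counts quoted in the article.
CURRENT_PARAMS = 3.6e9
TARGET_PARAMS = 175e9

# Assumption, not from the article: weights stored as 16-bit values.
BYTES_PER_PARAM = 2


def weights_gigabytes(params, bytes_per_param=BYTES_PER_PARAM):
    """Approximate storage for the weights alone, in gigabytes (1e9 bytes)."""
    return params * bytes_per_param / 1e9


scale_factor = TARGET_PARAMS / CURRENT_PARAMS

print(f"Planned model is about {scale_factor:.1f}x larger")
print(f"Current model weights: ~{weights_gigabytes(CURRENT_PARAMS):.1f} GB")
print(f"Planned model weights: ~{weights_gigabytes(TARGET_PARAMS):.1f} GB")
```

Under that assumption the planned model's weights alone would take hundreds of gigabytes, which may be one reason smaller, distilled versions are attractive for organizations that want to run a model on their own systems.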

“It’s a foundational model we will provide as a service for whatever tasks people want to solve,” said Sahlgren, who’s been working at the intersection of language and machine learning since he earned his Ph.D. in computational linguistics in 2006.

Permission to Speak Freely

It’s a capability increasingly seen as a strategic asset, a keystone of digital sovereignty in a world that speaks thousands of languages across nearly 200 countries.

Most language services today focus on Chinese or English, the world’s two most-spoken tongues. They’re typically created in China or the U.S., and they aren’t free.

“It’s important for us to have models built in Sweden for Sweden,” Sahlgren said.

Small Team, Super System

“We’re a small country and a core team of about six people, yet we can build a state-of-the-art resource like this for people to use,” he added.

That’s because Sweden has a powerful engine in BerzeLiUs, a 300-petaflops AI supercomputer at Linköping University. It trained the initial GPT-SW3 model using just 16 of the 60 nodes in the NVIDIA DGX SuperPOD.

The next model may exercise all the system’s nodes. Such super-sized jobs require super software like the NVIDIA NeMo Megatron framework.

“It lets us scale our training up to the full supercomputer, and we’ve been lucky enough to have access to experts in the NeMo development team. Without NVIDIA it would have been so much more complicated to come this far,” he said.
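
The node counts quoted above give a rough sense of how much headroom is left on the system. The sketch below uses only the figures from the article (300 petaflops, 60 nodes, 16 nodes used) and assumes, purely for illustration, that peak performance is spread evenly across nodes; real training throughput would be lower than peak.

```python
# Figures quoted in the article.
PEAK_PETAFLOPS = 300
TOTAL_NODES = 60
NODES_USED = 16


def share_of_system(nodes_used, total_nodes=TOTAL_NODES):
    """Fraction of the system's nodes used by a job."""
    return nodes_used / total_nodes


def peak_for_nodes(nodes, total_nodes=TOTAL_NODES, peak=PEAK_PETAFLOPS):
    """Peak petaflops for a subset of nodes.

    Assumption (not stated in the article): peak is divided evenly per node.
    """
    return peak * nodes / total_nodes


print(f"Share of nodes used: {share_of_system(NODES_USED):.0%}")
print(f"Peak for those nodes: ~{peak_for_nodes(NODES_USED):.0f} petaflops")
print(f"Headroom if all nodes are used: {TOTAL_NODES / NODES_USED:.2f}x")
```

On these assumptions the first model used a little over a quarter of the machine, so a job spanning every node would have nearly four times the peak compute available.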

A Workflow for Any Language

NVIDIA’s engineers created a recipe based on NeMo and an emerging process called p-tuning that optimizes massive models fast, and it’s geared to work with any language.

In one early test, a model nearly doubled its accuracy after NVIDIA engineers applied the techniques.

What’s more, it requires one-tenth the data, slashing the need for tens of thousands of hand-labeled records. That opens the door for users to fine-tune a model with the relatively small, industry-specific datasets they have at hand.
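
The general idea behind this family of techniques can be illustrated with a toy sketch: the pretrained model stays frozen, and only a small "virtual prompt" vector fed in alongside the input is trained. This is not NVIDIA's recipe or the NeMo API. It is a simplified stand-in that learns the prompt vector directly, whereas p-tuning as usually described produces the prompt embeddings with a small encoder network. The tiny vocabulary, weights and dataset are hypothetical.

```python
import math

# Frozen "pretrained" parts: hypothetical toy values, never updated below.
EMBED = {"bra": (1.0, 0.2), "kass": (-1.0, 0.1), "produkt": (0.0, 0.3)}
W_OUT = (2.0, -1.0)


def predict(tokens, prompt):
    """Probability of the positive label with one virtual prompt vector prepended."""
    vectors = [prompt] + [EMBED[t] for t in tokens]
    pooled = [sum(v[i] for v in vectors) / len(vectors) for i in range(2)]
    logit = sum(w * x for w, x in zip(W_OUT, pooled))
    return 1.0 / (1.0 + math.exp(-logit))


def loss(data, prompt):
    """Mean binary cross-entropy over (tokens, label) pairs."""
    total = 0.0
    for tokens, label in data:
        p = predict(tokens, prompt)
        total -= math.log(p if label == 1 else 1.0 - p)
    return total / len(data)


def tune_prompt(data, steps=200, lr=0.5):
    """Gradient descent on the prompt vector only; EMBED and W_OUT stay fixed."""
    prompt = [0.0, 0.0]
    for _ in range(steps):
        grad = [0.0, 0.0]
        for tokens, label in data:
            err = predict(tokens, prompt) - label  # d(loss)/d(logit)
            for i in range(2):
                # d(logit)/d(prompt[i]) = W_OUT[i] / (number of pooled vectors)
                grad[i] += err * W_OUT[i] / (len(tokens) + 1) / len(data)
        prompt = [p - lr * g for p, g in zip(prompt, grad)]
    return prompt


# Hypothetical four-example labeled dataset (1 = positive, 0 = negative).
DATA = [(["bra", "produkt"], 1), (["kass", "produkt"], 0), (["bra"], 1), (["kass"], 0)]

loss_before = loss(DATA, [0.0, 0.0])
tuned_prompt = tune_prompt(DATA)
loss_after = loss(DATA, tuned_prompt)
print(f"loss before: {loss_before:.4f}, after tuning the prompt: {loss_after:.4f}")
```

Only two numbers are trained here, while everything representing the pretrained model is left untouched. A real system would train many prompt vectors in a much larger embedding space, but the split between frozen and trainable parts is the point of the sketch.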

“We hope to inspire a lot of entrepreneurship in industry, startups and the public using our technology to develop their own apps and services,” said Sahlgren.

Writing the Next Chapter

Meanwhile, NVIDIA’s developers are already working on ways to make the enabling software better.

One test shows great promise for training new capabilities using widely available English datasets into models designed for any language. In another effort, they’re using the p-tuning techniques in inference jobs so models can learn on the fly.

Zenodia Charpy, a senior solutions architect at NVIDIA based in Gothenburg, shares the enthusiasm of the AI Sweden team she supports. “We’ve only just begun trying new and better methods to tackle these large language challenges. There’s much more to come,” she said.

The GPT-SW3 model will be made available by the end of the year through an early access program. To apply, contact [email protected].
